Wald AI DLP

Monitor and protect AI usage directly at the endpoint.

Trusted by Regulated Organizations

Govern Every AI Interaction With Confidence

Beyond Pattern Matching

A locally installed small language model (SLM) understands context before flagging, catching what regex rules and pattern-based models were never designed to see.

Zero Alert Fatigue

The lowest false positive and negative rates in enterprise AI. Context-driven classification means policies only fire when they should.

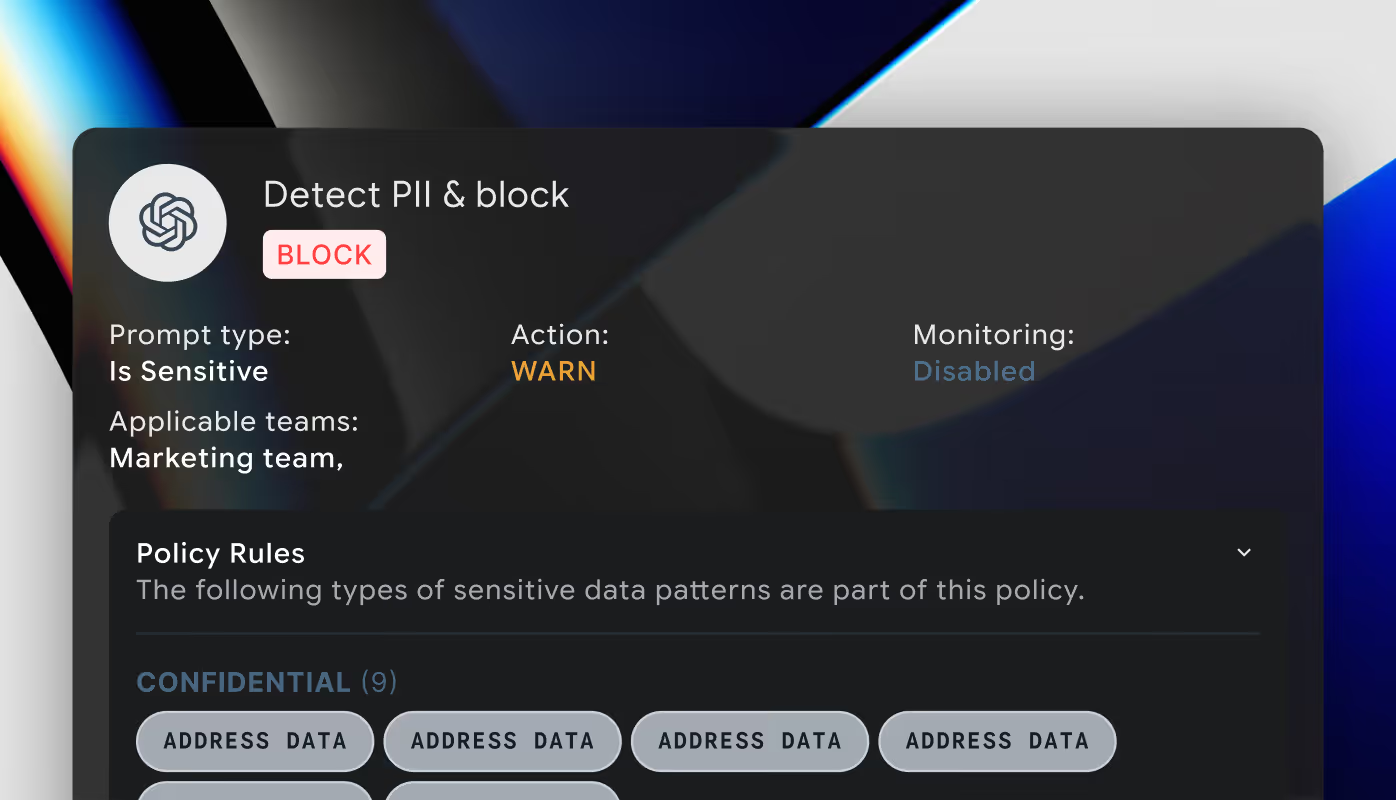

Flexible Policy Enforcement

Four actions, one policy engine. Allow, monitor, warn, and block across devices, browsers, data types, teams, and AI apps. Set it once, enforce everywhere.
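As a rough illustration of how four actions can be resolved by a single policy engine, here is a minimal Python sketch. It is not Wald's implementation or API: the names `Action`, `Event`, `Rule`, and `decide` are hypothetical, and the "strictest matching rule wins" resolution is an assumption made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum
from typing import Iterable, Optional


class Action(IntEnum):
    # Ordered from least to most restrictive so max() picks the strictest.
    ALLOW = 0
    MONITOR = 1
    WARN = 2
    BLOCK = 3


# The five dimensions a policy can be scoped to (taken from the text above).
DIMENSIONS = ("device", "browser", "data_type", "team", "ai_app")


@dataclass(frozen=True)
class Event:
    """One AI interaction observed at the endpoint (hypothetical shape)."""
    device: str
    browser: str
    data_type: str
    team: str
    ai_app: str


@dataclass(frozen=True)
class Rule:
    """A policy rule; a dimension left as None matches any value."""
    action: Action
    device: Optional[str] = None
    browser: Optional[str] = None
    data_type: Optional[str] = None
    team: Optional[str] = None
    ai_app: Optional[str] = None

    def matches(self, event: Event) -> bool:
        for name in DIMENSIONS:
            wanted = getattr(self, name)
            if wanted is not None and wanted != getattr(event, name):
                return False
        return True


def decide(rules: Iterable[Rule], event: Event,
           default: Action = Action.ALLOW) -> Action:
    """Return the strictest action among matching rules (assumed semantics)."""
    matched = [rule.action for rule in rules if rule.matches(event)]
    return max(matched, default=default)


# Example policy: monitor everything, warn on finance PII, block source code
# going to one specific (made-up) AI app.
rules = [
    Rule(Action.MONITOR),
    Rule(Action.WARN, team="finance", data_type="pii"),
    Rule(Action.BLOCK, data_type="source_code", ai_app="chatbot-x"),
]

event = Event("laptop-1", "chrome", "source_code", "engineering", "chatbot-x")
print(decide(rules, event).name)
```

Ordering the actions by severity keeps conflict resolution simple: when several rules match the same interaction, the most restrictive one is applied.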

Eliminate Shadow AI Risks in Three Steps

What is On-Device DLP for AI?

On-device DLP (Data Loss Prevention) is a security approach that detects and classifies sensitive data leakage directly at the endpoint without sending information to external servers.

Wald's on-device DLP provides:

- Local DLP model with no user-visible latency

- Complete visibility into all AI interactions

- Policy enforcement at the endpoint

- No network exposure of sensitive data

What This Means for Your Organization

Meet data residency requirements. Ensure GDPR/CCPA compliance. Pass your next audit with flying colors.

Mitigate shadow AI risks by proactively observing and mapping how employees interact with AI, in real time.

Let teams leverage AI at full speed. No more choosing between innovation and security.

Prevent data breaches before they happen. Protect your reputation and bottom line.

Maintains compliance with:

Frequently Asked Questions

How does on-device DLP differ from traditional DLP solutions?

Traditional DLP solutions typically operate at the network level, inspecting data as it passes through gateways, which means the data is sent to external servers for inspection. Wald's on-device DLP operates directly on the endpoint, inspecting all AI interactions locally as they are sent to AI platforms.

Can Wald’s AI DLP detect all types of sensitive data?

Yes. Wald’s AI security agent is designed to detect and classify sensitive information with high accuracy. Unlike tools that rely only on regular expression matching, it understands context and intent, which means it can identify sensitive content even if no explicit marker like a name, email address, or ID number is present.

Out of the box, it recognizes PII (Personally Identifiable Information), PHI (Protected Health Information), intellectual property, and source code, and it can be adapted for custom data types unique to your organization. This ensures protection not just against obvious risks, but also against subtle data exposures hidden in conversations or documents.

How does AI data observability benefit my organization?

AI data observability provides complete visibility into how AI tools are being used across your organization. This allows you to identify patterns of sensitive data sharing, understand AI usage trends, and make informed decisions about AI governance policies, all while maintaining compliance with regulations like GDPR and CCPA.