AI Data Loss Prevention (AI DLP): Why Your Traditional DLP Tool Can’t Stop GenAI Data Leaks

Picture this: Your finance analyst copies a sensitive revenue forecast into ChatGPT for a quick summary. Your legal team pastes contract language into Claude to speed up review. A developer drops internal source code into an LLM to debug faster.

None of them meant any harm. They’re just trying to work smarter, not harder. But here’s the thing: in each of those moments, your company’s most sensitive data just walked right out the door. Silently. Invisibly. And your traditional DLP solution? It didn’t catch a single one.

Welcome to the new reality of enterprise data security. This is exactly why AI Data Loss Prevention (AI DLP) has become the most critical security conversation your organization needs to have right now.

What is Data Loss Prevention (DLP)?

Data Loss Prevention (DLP) refers to technologies and practices designed to detect and prevent sensitive data from being exposed, misused, or transferred outside authorized boundaries. DLP systems understand where your data lives, how it moves, and who can access it, then enforce policies that reduce the risk of unauthorized data exposure.

For years, that meant monitoring email attachments, blocking file transfers to USB drives, and scanning network traffic. Traditional DLP prevented data from leaving through common channels like email, file transfers, or removable media.

When data stayed mostly on internal networks and endpoints, that model made sense.

But today? That model is dangerously outdated.

The AI Data Loss Crisis: By the Numbers

This isn’t theoretical risk. The statistics are alarming:

77% of enterprise AI users have been copying and pasting sensitive data into AI chatbot queries, according to a LayerX study. Sensitive data now makes up 34.8% of employee ChatGPT inputs, up sharply from just 11% in 2023.

Generative AI tools have become the leading channel for corporate-to-personal data exfiltration, responsible for 32% of all unauthorized data movement.

Nearly 40% of uploaded files contain PII or PCI data, while 22% of pasted text includes sensitive regulatory information.

71% of security leaders are concerned about data leaks via GenAI and LLM applications, yet most organizations still lack the tools to stop them.

69% of organizations cite AI-powered data leaks as their top security concern in 2025, yet nearly 47% have no AI-specific security controls in place.

The threat isn’t theoretical. It’s happening right now, in your organization, on your employees’ browsers as you read this.

Why Traditional DLP Tools Fail at AI Data Loss Prevention

Your legacy DLP solution was built for a world of on-premises data, predictable workflows, and static policies. Today’s reality is completely different.

Traditional DLP Was Built for Files, Not AI Prompts

Legacy DLP relies on static rules and pattern matching: searching for credit card numbers with regular expressions, for example. But when an employee pastes source code into ChatGPT, there’s no file involved, no email sent, and no policy violated in the traditional sense.

From the DLP system’s perspective, nothing happened.

The problem? Sensitive data now travels through browser-based AI prompts, and most legacy DLP tools are completely blind to this channel.

Traditional DLP Can’t Understand Context

Traditional DLP tools use defined policies and detection techniques like regex (regular expressions) to identify sensitive data. The main issue lies in their reliance on regex, a pattern language that matches specific sequences of characters in text.

While regex works well with structured data, it struggles to detect sensitive information in unstructured formats.

Consider this: A paragraph about an M&A deal might not trigger any regex rule, but it’s still deeply confidential. So is a financial projection described in conversational language, or a patient’s treatment plan written as notes.

None of these match a pattern. All of them are sensitive.
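To make the gap concrete, here is a minimal sketch of a pattern-based check. The pattern and the sample sentences are illustrative assumptions, not rules taken from any particular DLP product. It flags a structured card number but passes a conversational sentence about an unannounced acquisition.

```python
import re

# Illustrative pattern only: 16 digits in four groups, optionally separated
# by spaces or hyphens. Real DLP rule sets are much larger.
CARD_PATTERN = re.compile(r"\b(?:\d{4}[ -]?){3}\d{4}\b")


def regex_flags(text: str) -> bool:
    """Return True if the text contains something shaped like a card number."""
    return CARD_PATTERN.search(text) is not None


# Hypothetical inputs: one structured, one conversational.
structured = "Please charge card 4111 1111 1111 1111 for the renewal."
conversational = (
    "We plan to acquire our largest competitor next quarter; "
    "the board approved the offer but it has not been announced."
)

print(regex_flags(structured))      # True
print(regex_flags(conversational))  # False
```

The second sentence is arguably the more damaging leak, yet nothing in it has a shape a pattern can latch onto.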

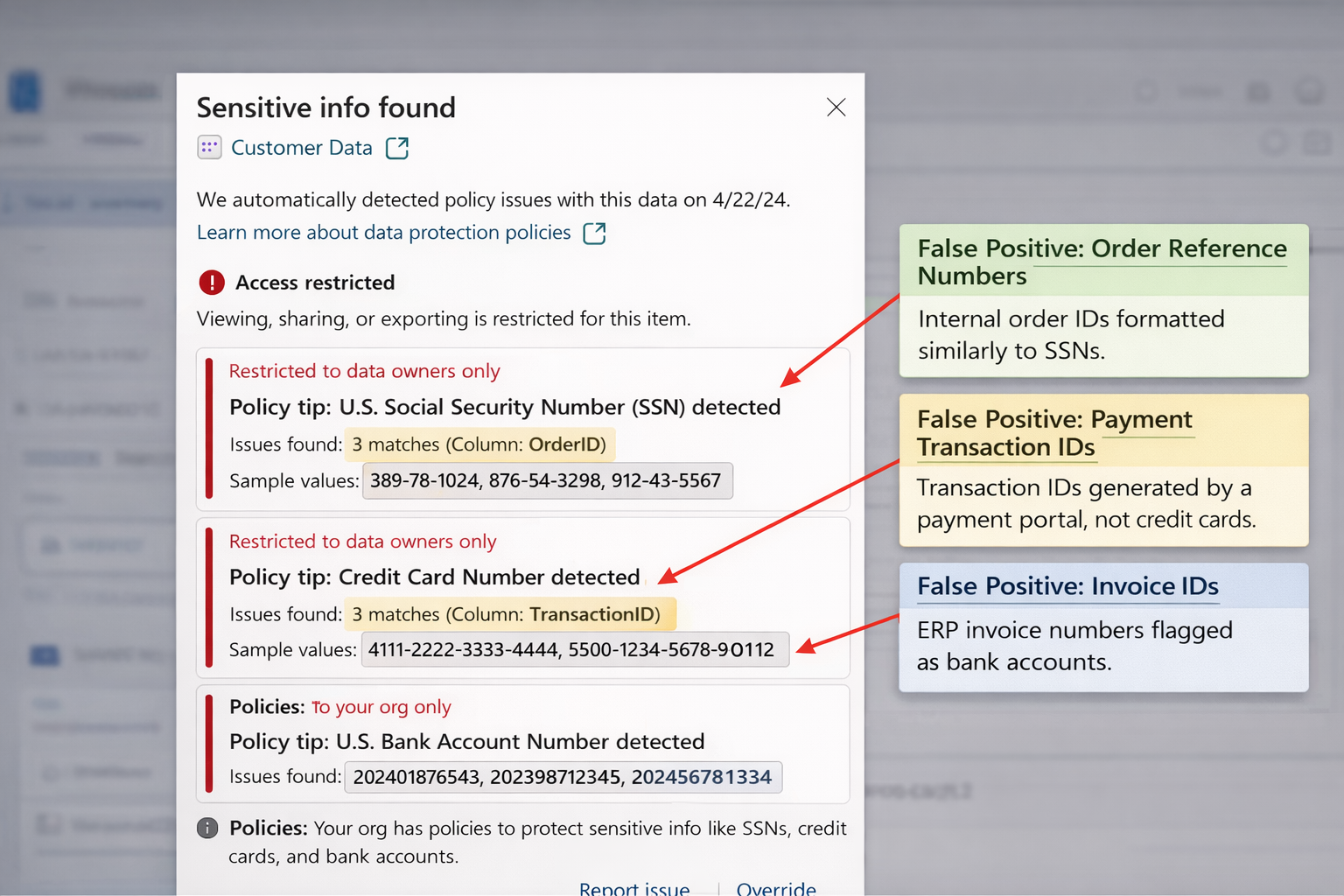

The example shows a traditional DLP system flagging data purely on pattern matches. The flagged values resemble SSNs, credit card numbers, and bank account numbers, but in reality they are just operational identifiers such as order IDs and transaction references.
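The false-positive scenario described here can be sketched in a few lines. The records and patterns are hypothetical. A Luhn checksum, which genuine payment card numbers satisfy, filters out the card-shaped transaction reference, but the SSN-shaped order ID is still flagged because SSNs carry no checksum and the pattern knows nothing about context.

```python
import re

SSN_SHAPE = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")
CARD_SHAPE = re.compile(r"\b\d{16}\b")


def luhn_valid(digits: str) -> bool:
    """Luhn checksum: double every second digit from the right, sum, mod 10."""
    total = 0
    for i, ch in enumerate(reversed(digits)):
        d = int(ch)
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


def card_hit(text: str) -> bool:
    """Flag only 16-digit runs that also pass the Luhn checksum."""
    return any(luhn_valid(m.group()) for m in CARD_SHAPE.finditer(text))


# Hypothetical operational records, not real identifiers.
records = [
    "Order ID 481-22-9035 shipped to warehouse B",
    "Transaction reference 1234567890123456 settled",
]

naive_hits = [r for r in records if SSN_SHAPE.search(r) or CARD_SHAPE.search(r)]
refined_hits = [r for r in records if SSN_SHAPE.search(r) or card_hit(r)]

print(len(naive_hits))    # 2
print(len(refined_hits))  # 1
```

Checksums trim some of the noise, but telling an order ID from an SSN requires reading the surrounding words, which is the contextual step pattern matching lacks.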

Legacy DLP Creates Noise, Not Signal

Traditional DLP doesn’t just miss threats; it floods security teams with false alarms.

92% of enterprises say that reducing DLP alert noise is “important” or “very important.” Legacy DLP systems, which rely on static regex rules and keyword matching, generate an overwhelming number of false positives that waste valuable time and resources.

On average, organizations now use six different DLP solutions, cobbled together across endpoints, email, cloud, and networks, yet data leaks persist.

72% of enterprises find DLP administration and maintenance “challenging or very challenging.”

Traditional DLP Can’t See Browser-Based Data Loss

70% of enterprise data leaks now happen directly in the browser, making them invisible to endpoint or network-based DLP tools.

53% of these leaks involve copying data into chat applications or AI prompts, a behavior that traditional tools simply cannot monitor.

Employees interact with AI tools directly through web browsers, and data flows through copy-paste actions, API calls, and third-party integrations. Many of these interactions don’t involve file transfers at all.

Legacy DLP Has No Concept of GenAI Risk

GenAI models don’t just store or transmit data; they transform it. Traditional DLP struggles in GenAI environments, where language-based transformations like summarization, paraphrasing, and translation introduce entirely new risks.

Leaks happen through language that traditional pattern-matching tools simply can’t catch.

Real-World AI Data Loss Prevention Failures

The risks aren’t hypothetical. Here’s what’s already happening in enterprises around the world:

Samsung engineers leaked confidential source code while trying to fix errors using ChatGPT in 2023, leading Samsung to temporarily ban all employee ChatGPT usage.

JPMorgan Chase restricted employee access to ChatGPT, fearing that even casual interactions could expose client data or breach compliance protocols.

Apple restricted ChatGPT use after employees began pasting snippets of internal product documentation and code.

In February 2025, a coordinated campaign compromised over 40 popular browser extensions used by 3.7 million professionals. The compromised extensions gained the ability to silently scrape data from browser tabs, including corporate sessions in ChatGPT, bypassing traditional DLP filters completely.

Beyond these individual cases, insider-related incidents cost organizations an average of $17.4 million annually, with 55% of them stemming from employee negligence rather than malicious intent.

Most employees aren’t trying to cause harm. They’re just trying to get their work done faster.

That’s precisely why AI DLP is so critical: most exposure comes from normal users doing normal work.

What is AI DLP (AI Data Loss Prevention)?

AI Data Loss Prevention (AI DLP) is a new generation of data loss prevention purpose-built for the era of generative AI and large language models.

Unlike legacy DLP tools, AI DLP solutions:

✅ Understand context, not just patterns: They analyze what content means, not just what it looks like.

✅ Work in the browser in real time: They monitor AI interactions as they happen, before data is submitted to an LLM.

✅ Detect PII, financials, and proprietary data semantically: No regex required. They understand natural language.

✅ Enforce policies intelligently: Allow, warn, or block based on data type, user role, or which LLM is being accessed.

✅ Coach users instead of just blocking them: They educate employees at the moment of risk, not after the fact.

The most effective AI DLP solutions operate at a semantic level: they understand meaning, not just patterns.

Modern AI DLP must understand language and context, support LLM workflows, and offer real-time visibility into how data flows, not just where it sits.

How AI Data Loss Prevention Works: The Wald Approach

This is exactly the problem Wald was built to solve.

Wald is an AI Data Loss Prevention platform that runs an on-device Small Language Model (SLM) directly on the endpoint. It monitors AI interactions in the browser in real time, detects sensitive data contextually (not just via keywords or regex), and enforces your organization’s AI policies before any data reaches an external LLM.

On-Device Intelligence for AI DLP

Unlike cloud-based DLP tools, Wald’s SLM runs locally on the endpoint. This means sensitive data is analyzed without ever leaving the device, solving the privacy paradox of sending sensitive data to a cloud tool in order to protect it.

Smart Contextual Detection Beyond Traditional DLP

Wald doesn’t just scan for patterns; it understands context. It can detect PII, financial data, source code, legal language, and proprietary business information even when it’s described conversationally, not in a structured format.

Here is a list of Data Classification Types from Wald.

Real-Time Browser Monitoring for GenAI Security

Wald monitors AI interactions in the browser as they happen. Whether an employee is using ChatGPT, Claude, Gemini, Copilot, or any other LLM, Wald is watching and enforcing.

Flexible Policy Enforcement for AI Usage

With Wald, your security team can configure policies to allow, warn, or block AI usage based on data type, user role, department, or specific LLM. It’s governance that moves at the speed of work.
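As a rough illustration of how allow, warn, and block rules keyed on data type, role, and LLM might be evaluated, here is a first-match-wins sketch. The `Rule` fields, the `decide` function, and the sample values are hypothetical and are not Wald’s actual policy format or API.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    WARN = "warn"
    BLOCK = "block"


@dataclass(frozen=True)
class Rule:
    data_type: str  # e.g. "source_code" or "pii"; "*" matches anything
    role: str       # e.g. "developer"; "*" matches anything
    llm: str        # e.g. "chatgpt"; "*" matches anything
    action: Action


def _matches(pattern: str, value: str) -> bool:
    return pattern == "*" or pattern == value


def decide(rules, data_type, role, llm, default=Action.ALLOW):
    """Return the action of the first rule that matches all three fields."""
    for rule in rules:
        if (_matches(rule.data_type, data_type)
                and _matches(rule.role, role)
                and _matches(rule.llm, llm)):
            return rule.action
    return default


# Hypothetical policy: specific exceptions first, broad rules after.
rules = [
    Rule("source_code", "developer", "private_assistant", Action.ALLOW),
    Rule("source_code", "*", "*", Action.BLOCK),
    Rule("pii", "*", "*", Action.WARN),
]

print(decide(rules, "source_code", "developer", "chatgpt"))  # Action.BLOCK
print(decide(rules, "pii", "analyst", "claude"))             # Action.WARN
```

Ordering rules from most to least specific keeps exceptions, such as an approved internal assistant, from being swallowed by a broad block rule.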

Coaching, Not Just Blocking

Rather than simply blocking actions and frustrating employees, Wald coaches users at the moment of risk, building a culture of responsible AI use instead of a culture of workarounds.

Private AI Assistant with Built-In Security

Wald also offers a Private AI Assistant and Secure LLM Access with built-in prompt sanitization, so employees can still be productive with AI, just safely.

This matters especially in regulated industries like banking, healthcare, insurance, legal, and manufacturing, where the cost of a single data leak can be catastrophic.
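Prompt sanitization in general can be pictured as swapping sensitive values for placeholders before a prompt leaves the device, then restoring them in the response. The sketch below is an assumption-laden illustration, not Wald’s implementation: the two regex detectors are simple stand-ins for whatever detector a real system uses, and `sanitize` and `restore` are hypothetical names.

```python
import re

# Stand-in detectors for illustration; a contextual detector would replace these.
DETECTORS = {
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def sanitize(prompt: str) -> tuple[str, dict[str, str]]:
    """Replace detected values with numbered placeholders.

    Returns the sanitized prompt and a placeholder-to-original mapping
    that stays on the local machine.
    """
    mapping: dict[str, str] = {}
    for label, pattern in DETECTORS.items():
        def replace(match, label=label):
            placeholder = f"[{label}_{len(mapping) + 1}]"
            mapping[placeholder] = match.group()
            return placeholder
        prompt = pattern.sub(replace, prompt)
    return prompt, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text that contains placeholders."""
    for placeholder, value in mapping.items():
        text = text.replace(placeholder, value)
    return text


original = "Email jane.doe@example.com about SSN 123-45-6789."
safe, mapping = sanitize(original)
print(safe)  # Email [EMAIL_1] about SSN [SSN_2].
```

Only `safe` would be sent to the external model; calling `restore` on the model’s reply reinserts the real values locally.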

Why Your Organization Needs AI DLP Now

AI adoption is not slowing down. 78% of organizations reported using AI in 2025, up sharply from 55% in 2023.

91% of enterprises intend to increase their DLP spending over the next 12 months.

But simply spending more on traditional, outdated DLP solutions isn’t the answer. Organizations need real-time policy enforcement tools that can prevent sensitive data from being shared with AI models while allowing employees to continue leveraging AI for productivity.

The organizations that win in this environment won’t be the ones that block AI outright; that battle is already lost.

They’ll be the ones that govern AI intelligently, in real time, at the point of risk.

That’s what AI Data Loss Prevention does. That’s what Wald delivers.

AI DLP Best Practices for Enterprise Security

Protecting your organization from AI-powered data leaks requires a comprehensive approach:

1. Implement AI-specific DLP controls that understand browser-based interactions and natural language prompts.

2. Monitor AI tool usage in real time across all LLMs your employees access, including ChatGPT, Claude, Gemini, and Copilot.

3. Enforce contextual policies that allow, warn, or block based on data sensitivity, user role, and business context.

4. Educate employees at the moment of risk rather than relying solely on annual training sessions.

5. Use on-device analysis to protect sensitive data without creating new privacy risks.

6. Provide secure AI alternatives so employees can remain productive without exposing corporate data.

7. Regularly audit AI interactions to identify patterns and refine policies.
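The audit step in item 7 could start as simply as counting risky events by department and data type. The log entries and field names below are hypothetical; a real deployment would read them from whatever the DLP tool exports.

```python
from collections import Counter

# Hypothetical audit log entries; the field names are assumptions.
events = [
    {"department": "finance", "data_type": "financial", "action": "block"},
    {"department": "engineering", "data_type": "source_code", "action": "warn"},
    {"department": "engineering", "data_type": "source_code", "action": "block"},
    {"department": "legal", "data_type": "pii", "action": "allow"},
]


def risky_counts(events):
    """Count warn and block events per (department, data_type) pair."""
    return Counter(
        (e["department"], e["data_type"])
        for e in events
        if e["action"] in {"warn", "block"}
    )


top = risky_counts(events).most_common(1)
print(top)  # [(('engineering', 'source_code'), 2)]
```

A recurring hotspot like this one suggests where a policy needs tightening or where a secure alternative is missing.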

Frequently Asked Questions About AI Data Loss Prevention

What is the difference between traditional DLP and AI DLP?

Traditional DLP uses pattern matching and regex to detect structured data like credit card numbers. AI DLP uses semantic analysis to understand context and detect sensitive information in natural language, including conversational prompts to AI tools.

Can traditional DLP tools detect data leaks to ChatGPT?

No. 70% of enterprise data leaks now happen directly in the browser, where traditional endpoint and network-based DLP tools cannot monitor copy-paste actions into AI chatbots.

How does AI DLP work in real time?

AI DLP solutions like Wald run on-device models that analyze prompts before they’re submitted to external LLMs. This allows real-time detection and policy enforcement without a round trip to a cloud service.

What types of sensitive data can AI DLP detect?

AI DLP can detect PII, financial data, source code, legal documents, proprietary business information, healthcare records, and other sensitive content, even when described conversationally rather than in structured formats.

Is AI DLP necessary for regulated industries?

Yes. Industries like banking, healthcare, insurance, and legal face severe penalties for data breaches. Insider-related incidents cost organizations an average of $17.4 million annually, making AI DLP essential for compliance.

How does Wald protect privacy while analyzing sensitive data?

Wald runs its Small Language Model (SLM) locally on the endpoint. Sensitive data is analyzed without ever leaving the device, eliminating the privacy paradox of cloud-based DLP solutions.

Protect Your Organization with AI Data Loss Prevention

Your employees are already using AI. The question is whether you have the right controls in place.

See how Wald helps enterprises enforce AI policies without slowing their teams down.

👉 Visit www.wald.ai to learn more or request a demo.